Latest articles

From Generic to Domain-Smart: Tailoring Embeddings for High-Performance Business Search

1. Strategic Context and Challenges Modern answer engines look simple from the outside: a user asks a question, and the system returns a grounded answer. But before the language model can write anything useful, the system must first retrieve the right sources from the enterprise knowledge base. If the right sources are not selected, the answer engine is already limited, no matter how powerful the language model is. In most

Beyond Static Chunking: Multimodal and Adaptive Segmentation for Improved Information Retrieval

Introduction Retrieval-Augmented Generation (RAG) systems [Li et al., 2025] rely heavily on how documents are segmented before indexing. This preprocessing step, known as chunking, directly affects retrieval quality and, consequently, the performance of downstream question-answering systems. In practice, chunking strategies [Jain et al., 2025] rarely take document structure into account; instead, documents are

Duck: A Customized LSP for Berger-Levrault. Because, why not?

Introduction The Language Server Protocol (LSP) is a standard that defines how code editors communicate with language-specific servers to provide advanced development features such as autocompletion, go-to-definition, find references, and diagnostics (errors and warnings). Before LSP, each editor had to implement its own support for every programming language, leading to duplicated effort and inconsistent feature

From modeling to optimization: solving the Stock Size Problem with JuMP and its compatible state-of-the-art solvers

Introduction In a context where the supply chain has become a central and structuring component of the Industry 5.0 ecosystem, recent research is shifting toward a new paradigm: Supply Chain 5.0. This paradigm aims to integrate advanced technologies in order to design highly intelligent, adaptive, and sustainable logistics networks. Among the key topics associated with

The future of business software: orchestrating capabilities rather than screens

Introduction For several decades, enterprise software has been shaped by a fragile compromise between technical complexity and user readability. Systems accumulate features, formalise rules, store data, and generate documents, while leaving users to reconstruct meaning and sequences of actions themselves. “There is no silver bullet”, wrote Fred Brooks in a 1986 paper on the inherent limits of software

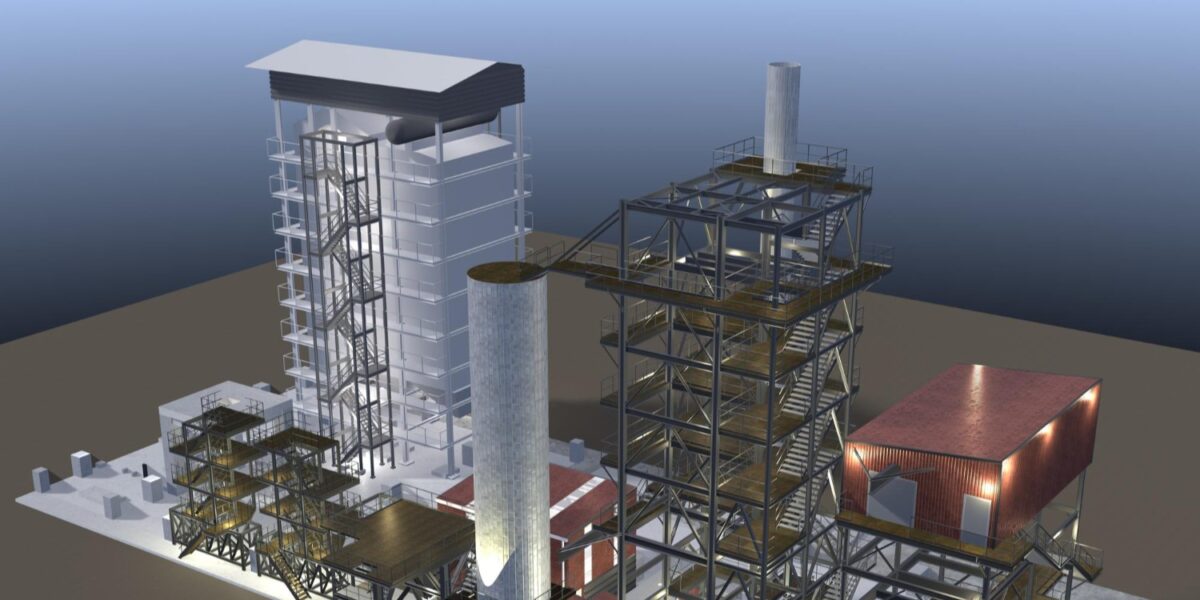

BL-Viz4D: A Digital Twin viewer for Maintenance

BL-VIZ4D is a research project led by Berger-Levrault, dedicated to visualizing, synchronizing, and interacting with digital twins applied to maintenance. Stemming from thesis work conducted in partnership with the LIRIS laboratory (Laboratory of Image Informatics and Information Systems), BL-VIZ4D explores the scientific and technological foundations of a multi-scale 3D viewer capable of aggregating heterogeneous

Evaluating the Energy Impact of Design Decisions in Web Applications

Introduction Software systems represent a significant and growing share of global energy consumption. Recent studies estimate that digital technologies account for approximately 6% of worldwide energy usage, with an annual increase of around 6.2%. This growing concern requires organizations such as Berger-Levrault to measure and reduce the environmental footprint of their software systems. During software

Yearbook Research & Innovation 2025: Governed, Responsible, and reality-based Research!

We are proud to announce the publication of our 2025 Research & Innovation Yearbook! More than just an annual review, this document marks a structural shift in the way we design and conduct research. Innovation is no longer seen as a simple technological promise, but as a demanding discipline, confronted with the constraints of reality

Angular or React: Which One Consumes Less Energy?

When developers choose a frontend framework, the usual criteria come to mind: performance, ecosystem, maintainability, developer experience, and community support. But there is a factor that is almost never discussed: energy consumption! As software systems grow in scale, the environmental impact of digital technologies is becoming impossible to ignore. While green software engineering research has

Financing Long-Term Innovation: A Strategic Lever for Research at Berger-Levrault

A Strong Commitment to Innovation As a leading industrial company in the field of software and digital services for local authorities and public-sector stakeholders, Berger-Levrault places innovation at the heart of its development strategy. In a context of accelerated digital transformation, anticipating future uses, exploring new technologies, and preparing tomorrow’s solutions have become key challenges

Circular economy and Collaborative Networks: The Future of Asset Management

The global economy is shifting toward sustainability, driven by environmental urgency, regulatory demands, and evolving consumer expectations. Businesses are increasingly adopting circular economy principles, moving away from the linear “take, make, waste” model to systems that extend product lifecycles, minimize waste, and optimize resource use [Rejeb et al., 2025]. This transition aligns with the rise

52nd Euromicro Conference on Software Engineering and Advanced Applications – SEAA 2026 (Krakow – Poland)

Franco-German Dialogue Among AI Industry Leaders (Paris – France)

PMS 2026 – 34th International Conference on Program Comprehension (Toulouse – France)

ICPC – 34th International Conference on Program Comprehension (Rio de Janeiro – Brazil)

CHI 2026 – International Conference on Human-Computer Interaction (Barcelona – Spain)

Sophie de Thoré guest on Pari ETI on BFM Business: Innovation, Growth and Digital Transformation

The digital transformation of public and private organisations, business resilience in the face of economic change, and responsible innovation were the focus of discussions on the Pari ETI programme broadcast on BFM Business. On this occasion, Sophie de Thoré spoke on behalf of Berger-Levrault, alongside Jérôme de Lavergnolle (Saint Louis), Jean-Luc Gunéard (LPR) and Augustin

A New Milestone in Building a European AI Ecosystem for Industry

Last Friday marked a significant step forward for the European ecosystem of artificial intelligence applied to industry. After more than a year of close collaboration, the report resulting from the Franco-German dialogue on industrial AI was officially presented to French and German authorities during a dedicated forum held at the Ministry of Economy. This initiative,


Camille Dupré Ph.D. thesis defense: “Pad-based Interaction in Mixed Reality environments”

On Thursday 18th December at 2 p.m. Paris time, Camille Dupré, Ph.D. candidate, defended her thesis entitled “Pad-based Interaction in Mixed Reality environments”. Her thesis defense took place at the LISN, in Gif-sur-Yvette (660 Av. des Sciences Bâtiment, 91190), France. Take a look at the summary below. Summary Mixed Reality (MR) environments integrate virtual elements into

Nihed Bendahman Ph.D. thesis defense: “Evaluation and mitigation of hallucinations in automatic summarization in the specific context of legal documents”

On Monday 15th December at 2 p.m. Paris time, Nihed Bendahman, Ph.D. candidate, defended her thesis entitled “Evaluation and mitigation of hallucinations in automatic summarization in the specific context of legal documents”. Her thesis defense took place at the IRIT Research Laboratory, in Toulouse, France. Take a look at the summary below. Summary Legal monitoring is a

Gabriel Darbord Ph.D. thesis defense: “Automatic test generation to help modernize our applications”

On Friday 5th December at 9 a.m. Paris time, Gabriel Darbord, Ph.D. candidate, defended his thesis entitled “Automatic test generation to help modernize our applications”. His thesis defense took place in Lille, France. Take a look at the summary below. This thesis is fully in line with the partnership between Berger-Levrault and Inria, which aims to

Berger-Levrault strengthens its ties with AI startups!

We are proud to announce that we have joined Hub France IA, the largest association dedicated to artificial intelligence in France. This network now brings together more than 250 members—companies, startups, research laboratories, and institutions—who share the same goal: to accelerate the development and adoption of AI in France and Europe. Getting closer to the

Celebrating New PhDs from the BL.Research Team!

At Berger-Levrault, research is more than a mission—it’s a shared adventure. As the new academic year begins, we are proud to celebrate the success of four of our colleagues from the BL.Research team, who have reached a major milestone in their scientific journeys: the defense of their doctoral theses. These achievements are the result of