What if we could automatically count and detect objects and various pieces of equipment in a building? This would have a tremendous impact on inventory activities, daily inspections, making these tasks easier and faster. This is what we are trying to achieve here. The main idea consists in relying on depth cameras (such ad LiDAR, or Kinect, or even some cameras in very high range smartphone) to scan the environment and detect objects and their features.

In that very ambitious goal, the key technology is Deep Learning. Deep Learning is a type of artificial intelligence that relies on deep neural networks. These techniques have proven to be very efficient in analyzing 2D images and videos. With this project, we try to go further, by applying Deep Learning to a 3D scan of buildings. The scan is obtained by using LiDAR sensors.

LiDAR Dynamic Acquisition Mounted on Mobile Devices

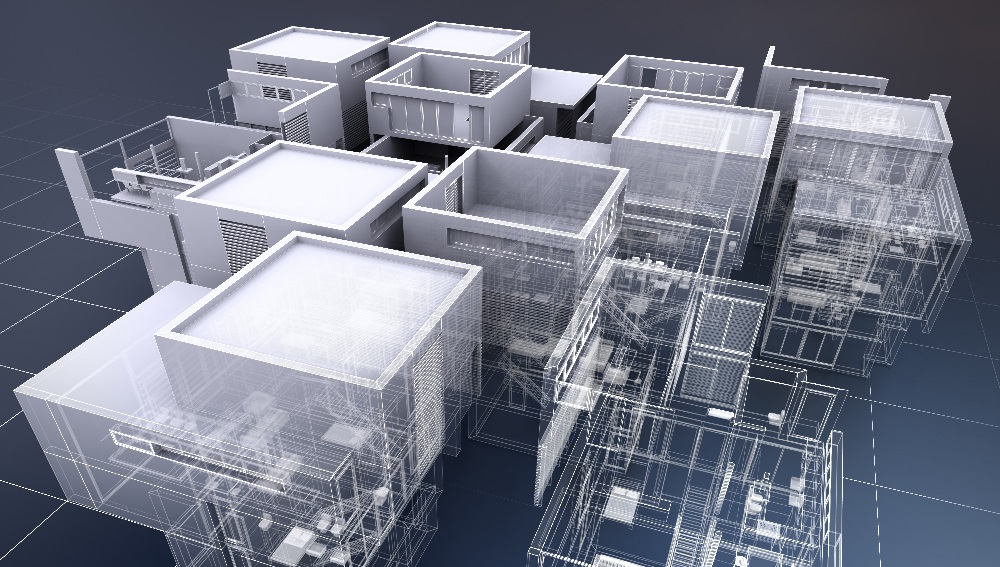

LiDAR sensors are now sufficiently miniaturized to be mobile on indoor environments and can be used to perform dynamic acquisition of an entire scene such as a room, a building, a factory, or an airport. Today, such a capture provides with more 3D spatial context and precision about the depth than a traditional 2D video camera acquisition. From a LiDAR point cloud, it is possible to detect the objects in the 3D scene in order to manage the building or factory facilities. For example, knowing precisely how many extinguishers, chairs, tables, or screens there are, and where they are located would greatly help to update building the equipment databases, finding them and monitoring their status. Thus any indoor objects extracted from the point cloud can be projected to an existing CAD system (Computer-Aided Design) or indoor map system.

Pattern Recognition applied to 3D LiDAR Points Clouds

Pattern recognition has entered an era of complete renewal with the development of deep-learning in the academic community as well as in the industrial world, due to the significant breakthrough these algorithms have achieved in 2D image processing during the last five years. Currently, most of the deep learning research still focuses on 2D imagery with challenges such as ImageNet Large Scale Visual Recognition Challenge (ILSVRC). This sudden boost of performance led to robust and real-time new object detection algorithms like Faster R-CNN (for Recurrent-Convolutional Neural Network, see that video to get an idea of what a Faster R-CNN is capable of).

However, generalizing them to 3D data is not a simple task. This is especially true in the case of 3D point cloud where the information is not structured as in meshes. The goal in this project is to train a neural network so that it can recognize certain objects in a cloud of points acquired inside buildings. This would make it much easier to identify and monitor the furniture.

In our case, the dot cloud comes from a LiDAR acquisition made in the CARL | Berger-Levrault building (Limonest, France). Since we do not have manual annotations, the neural network (a modified version of PointNet) is trained from the Stanford database.

The training task is semantic segmentation, that is, for each point in the cloud, the network will predict a class of belonging.

Figure 1 – Cross-section view of CARL|Berger-Levrault building (Limonest, France) in point cloud, LiDAR report

Figure 2 – A meeting room

Figure 3 – The hall of the building digitized in cloud points

First Results

These first results are promising, in fact despite the uneven lighting and lighting conditions during the acquisition of the point cloud, we manage to very clearly identify the points which belong to the floor, to the chairs, to the tables, to the white boards and on the walls. (see legend below)

| Significant Color | Legend |

| | Wall |

| | Floor |

| | Chair |

| | Board |

| | Table |

Figure 4 – Predictions of the neural network of detected objects in the meeting room

Figure 5 – Predictions of the neural network of detected objects in the hall of the building